One of the coolest new features in vSphere 5 is the ability to use multiple network adaptors for vMotion traffic. This will work on Standard vSwitches and Distributed Switches. It does not require any special license. If you have vMotion licensed you can use the feature.

Prior to vSphere 5, vMotion traffic would only use one physical network adaptor. Adding more adaptors, changing load balancing to IP Hash and bonding the physical NICs in an EtherChannel did not help. That meant the only way to increase vMotion throughput was to upgrade from 1Gb to 10Gb Ethernet! Not a plausible solution for everyone.

Configuration

To use this new feature you need to configure multiple vmkernel adaptors for vMotion: one for each physical adaptor connected to the vSwitch.

In my example I want to use two physical adaptors for vMotion (vmnic1 and vmnic2). First I create a new vSwitch and create two vmkernel adaptors for vMotion on it. The vmkernel IP addresses are in the same subnet.

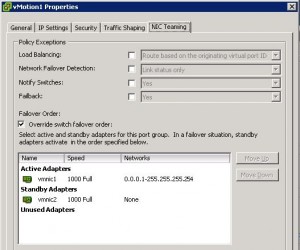

After creating the two vmkernel interfaces on the vSwitch we need to set “vMotion1” active on vmnic1 and standby on vmnic2. We will then set the “vMotion2” interface active on vmnic2 and standby on vmnic1.
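The active/standby pairing above follows a simple pattern, so here is a minimal Python sketch of it. It only builds and prints the plan; it does not talk to vCenter or an ESXi host. The function name `failover_plan` and the `vMotionN` naming are my own choices for illustration, and putting every remaining NIC on standby when there are more than two is an assumption that extends the two-NIC example.

```python
def failover_plan(nics):
    """Build one vMotion vmkernel port group entry per physical NIC.

    Each port group gets a different NIC as its active uplink.
    Assumption: all remaining NICs are placed on standby.
    """
    plan = []
    for index, active in enumerate(nics, start=1):
        plan.append({
            "portgroup": f"vMotion{index}",  # illustrative naming only
            "active": active,
            "standby": [nic for nic in nics if nic != active],
        })
    return plan


if __name__ == "__main__":
    # The two-NIC example from this article.
    for entry in failover_plan(["vmnic1", "vmnic2"]):
        standby = ", ".join(entry["standby"])
        print(f'{entry["portgroup"]}: active={entry["active"]} standby={standby}')
```

Calling it with a longer list, for example `failover_plan(["vmnic1", "vmnic2", "vmnic3"])`, gives a three-adaptor layout you can use as a checklist while clicking through the NIC teaming settings.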

With two 1Gb adaptors you now have up to 2Gb of throughput for your vMotion network. If you need more throughput just add more physical NICs and create an extra vmkernel interface for each one.

Verifying it is working

To verify you have set it up correctly and you are using multiple nics for vMotion traffic do the following:

1. Select your ESXi host in the vCenter inventory.

2. Select the “Performance” tab.

3. Select “Advanced”.

4. Select “Chart Options”.

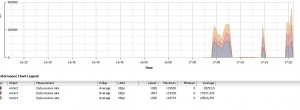

5. Then create a “network” graph with a realtime interval and choose the NICs you are using for vMotion. Choose either “data transmit rate” or “data receive rate” and make the graph stacked.

6. Start a vMotion and check that every nic is loaded.

Here is a screenshot of my environment. Look at the end of the graph. A vMotion is saturating three 1Gb adaptors! 🙂
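If you would rather check numbers than eyeball a stacked graph, the same test can be written down as a small Python sketch. It assumes you have copied the per-NIC rates from the chart by hand into a dictionary. The function name `idle_nics`, the threshold and the sample values are all made up for illustration and are not measurements from my environment.

```python
def idle_nics(samples, threshold):
    """Return the NICs whose peak rate never went above the threshold.

    samples maps a NIC name to a list of rates read from the chart,
    in whatever unit the chart reports.
    """
    return sorted(
        nic for nic, rates in samples.items()
        if max(rates, default=0) <= threshold
    )


if __name__ == "__main__":
    # Hypothetical readings taken during a vMotion (illustrative only).
    readings = {
        "vmnic1": [5, 800, 950, 10],
        "vmnic2": [4, 3, 6, 5],
    }
    unused = idle_nics(readings, threshold=100)
    if unused:
        print("Not carrying vMotion traffic:", ", ".join(unused))
    else:
        print("Every NIC is loaded")
```

An empty result means every adaptor carried traffic during the vMotion. Any NIC that is listed probably has its vmkernel interface set up with the wrong active/standby order.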

Frank,

Good article! Question however. We currently run a single vSwitch that has 2 PG and 2 physical nics. One PG for Mgmt Network, the other PG for vMotion. In this design would it be possible to add a 3rd physical nic and configure fail over as follows:

vmk0 (Mgmt) – vmnic0 Active – vmnic1 and vmnic2 standby

vmk1 (vMotion1) – vmnic1 Active – vmnic2 Standby – vmnic0 – Unused

vmk2 (vMotion2) – vmnic2 Active – vmnic1 Standby – vmnic0 – Unused

Thanks in advance,

-Jason

Hi Jason, Thanks.

Yes you can configure it that way! No problem 🙂

Excellent Article, and quite useful as well…many thanks…!

Hi Frank,

do you know if only one adapter is used as well if I use an EtherChannel?

thanks

Sven

Yes, only one adapter is used with EtherChannel. The only way to use multiple adapters is multiple vmkernel ports.

thanks Frank for answer 🙂